Friday, April 3, 2026

Accuracy test for protein language models shines light into AI 'black box'

How the brain charts emotion in a map-like way

Wednesday, January 14, 2026

'Periodic table' for AI methods aims to drive innovation

Wednesday, January 7, 2026

Unlocking design secrets of deep-sea microbes

The microbe Pyrodictium abyssi is an archaeon, a member of what’s known as the third domain of life, and an extremophile. It lives in deep-sea thermal vents, at temperatures above the boiling point of water, without light or oxygen, withstanding the enormous pressure at ocean depths of thousands of meters.

A biomatrix of tiny tubes of protein, known as cannulae, links cells of Pyrodictium abyssi together into a highly stable microbial community. No one knew how these single-celled microbes accomplished this feat of extreme engineering. Until now.

A study using advanced microscopy techniques reveals new details about the elegant design of the cannulae and the remarkable simplicity of their method of construction. Nature Communications published the work, led by scientists at Emory University; the University of Virginia, Charlottesville; and Vrije Universiteit Brussel in Belgium.

The discovery holds the potential to inspire innovations in biotechnology, from the development of new “smart” materials to nanoscale drug delivery systems.

“Not only are the cannulae strong enough to endure extreme conditions, they’re beautiful,” says Vincent Conticello, Emory professor of chemistry and co-senior author of the paper. “To me, they resemble columns from the classical architecture of ancient Greece or Rome,” he adds, citing their fluted edges and precise regularity.

Tuesday, October 28, 2025

Electric charge connects jumping worm to prey

Tuesday, August 12, 2025

AI reveals new physics in dusty plasma

Physicists used a machine-learning method to identify surprising new twists on the non-reciprocal forces governing a many-body system.

Wednesday, July 2, 2025

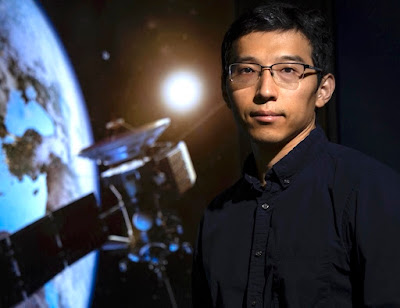

Exploring the frontiers of data science

As a high-tech geographer, Xiao Huang uses remote sensing and AI for insights into how to design more equitable cities, improve management of natural resources, lessen the impact of natural and human-caused disasters, and improve public health policies.

"I love geography and computer technology," says Huang, assistant professor in Emory's Department of Environmental Sciences. "I want to use my knowledge of these fields to help humanity, especially socially disadvantaged communities."

Related:

Developing a new approach to control a dangerous urban mosquito in Ethiopia

Friday, June 27, 2025

New AI tool supports best practices to prevent spread of dangerous C. diff infections

Friday, May 2, 2025

Developing a new approach to control a dangerous, invasive mosquito in Ethiopia

Tuesday, April 15, 2025

New AI tool set to speed quest for advanced superconductors

The study was led by theorists at Emory University and experimentalists at Yale University. Senior authors include Fang Liu and Yao Wang, assistant professors in Emory’s Department of Chemistry, and Yu He, assistant professor in Yale’s Department of Applied Physics.

The team applied machine-learning techniques to detect clear spectral signals that indicate phase transitions in quantum materials — systems where electrons are strongly entangled. These materials are notoriously difficult to model with traditional physics because of their unpredictable fluctuations.

“Our method gives a fast and accurate snapshot of a very complex phase transition, at virtually no cost,” says Xu Chen, the study’s first author and an Emory PhD student in chemistry. “We hope this can dramatically speed up discoveries in the field of superconductivity.”

One of the challenges in applying machine learning to quantum materials is the lack of sufficient high-quality experimental data needed to train models. To overcome this, the researchers used high-throughput simulations to generate large amounts of data. They then combined these simulation results with just a small amount of experimental data to create a powerful and efficient machine-learning framework.

Read more about the discovery.

Monday, April 7, 2025

Chatbot opens computational chemistry to nonexperts

Advanced computational software is streamlining quantum chemistry research by automating many of the processes of running molecular simulations. The complicated design of these software packages, however, often limits their use to theoretical chemists trained in specialized computing techniques.

Wednesday, March 5, 2025

Atlanta Science Festival set to entertain, inspire and engage all ages

By Carol Clark

Atlanta Science Festival returns March 8-22, with more than 100 events throughout the metro area, inviting the public to join fun, interactive and educational experiences. The acclaimed city-wide celebration, one of the largest of its kind in the country, showcases the myriad science, technology, engineering and mathematics (STEM) innovations happening in Atlanta, including at Emory.

Tuesday, January 7, 2025

Bittersweet secrets of the fruit fly brain

The sense of taste carries evolutionary benefits key to survival. A sweet taste, for instance, signals energy-dense nutrients important to animals foraging for food — including humans. A bitter taste may warn of a toxic substance.

Wednesday, March 6, 2024

Atlanta Science Festival returns to inspire discovery for all ages

Wednesday, January 24, 2024

Computer scientists create simple method to speed cache sifting

Thursday, October 19, 2023

Math trio makes new points about size of the smallest triangle

Monday, August 7, 2023

Physicists open new path to exotic form of superconductivity

By Carol Clark

Physicists have identified a mechanism for the formation of oscillating superconductivity known as pair-density waves. Physical Review Letters published the discovery, which provides new insight into an unconventional superconductive state seen in certain materials, including high-temperature superconductors.

“We discovered that structures known as Van Hove singularities can produce modulating, oscillating states of superconductivity,” says Luiz Santos, assistant professor of physics at Emory University and senior author of the study. “Our work provides a new theoretical framework for understanding the emergence of this behavior, a phenomenon that is not well understood.”

First author of the study is Pedro Castro, an Emory physics graduate student. Co-authors include Daniel Shaffer, a postdoctoral fellow in the Santos group, and Yi-Ming Wu from Stanford University.

The work was funded by the U.S. Department of Energy’s Office of Basic Energy Sciences.

The puzzle of superconductivity

Santos is a theorist who specializes in condensed matter physics. He studies the interactions of quantum materials — tiny things such as atoms, photons and electrons — that don’t behave according to the laws of classical physics.

Superconductivity, or the ability of certain materials to conduct electricity without energy loss when cooled to a super-low temperature, is one example of intriguing quantum behavior. The phenomenon was discovered in 1911 when Dutch physicist Heike Kamerlingh Onnes showed that mercury lost its electrical resistance when cooled to about 4 kelvins, or roughly minus 452 degrees Fahrenheit. That’s far colder than Uranus, the coldest planet in the solar system, where temperatures bottom out near minus 371 degrees Fahrenheit.
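
The temperature arithmetic here is easy to check by hand or in a few lines of Python. The sketch below converts kelvins to degrees Fahrenheit. The 49-kelvin figure for Uranus's coldest measured temperature is an assumption brought in from outside this article, and the function name is purely illustrative.

```python
def kelvin_to_fahrenheit(kelvin: float) -> float:
    """Convert a temperature from kelvins to degrees Fahrenheit."""
    celsius = kelvin - 273.15          # kelvins to degrees Celsius
    return celsius * 9.0 / 5.0 + 32.0  # degrees Celsius to degrees Fahrenheit


# Approximate temperature at which mercury becomes superconducting.
MERCURY_TRANSITION_K = 4.0
# Assumed value (not from the article): coldest temperature measured on Uranus.
URANUS_MINIMUM_K = 49.0

print(f"{kelvin_to_fahrenheit(MERCURY_TRANSITION_K):.1f} F")  # -452.5 F
print(f"{kelvin_to_fahrenheit(URANUS_MINIMUM_K):.1f} F")      # -371.5 F
```

On these numbers, 4 kelvins comes out roughly 80 Fahrenheit degrees colder than the assumed Uranus minimum.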

It took scientists until 1957 to come up with an explanation for how and why superconductivity occurs. At normal temperatures, electrons roam more or less independently. They bump into other particles, causing them to shift speed and direction and dissipate energy. At low temperatures, however, electrons can organize into a new state of matter.

“They form pairs that are bound together into a collective state that behaves like a single entity,” Santos explains. “You can think of them like soldiers in an army. If they are moving in isolation they are easier to deflect. But when they are marching together in lockstep it’s much harder to destabilize them. This collective state carries current in a robust way.”

A holy grail of physics

Superconductivity holds huge potential. In theory, it could allow for electric current to move through wires without heating them up, or losing energy. These wires could then carry far more electricity, far more efficiently.

“One of the holy grails of physics is room-temperature superconductivity that is practical enough for everyday-living applications,” Santos says. “That breakthrough could change the shape of civilization.”

Many physicists and engineers are working on this frontline to revolutionize how electricity gets transferred.

Meanwhile, superconductivity has already found applications. Superconducting coils power electromagnets used in magnetic resonance imaging (MRI) machines for medical diagnostics. A handful of magnetic levitation trains are now operating in the world, built on superconducting magnets that are 10 times stronger than ordinary electromagnets. The magnets repel each other when the matching poles face each other, generating a magnetic field capable of levitating and propelling a train.

The Large Hadron Collider, a particle accelerator that scientists are using to research the fundamental structure of the universe, is another example of technology that relies on superconductivity.

Superconductivity continues to be discovered in more materials, including many that are superconductive at higher temperatures.

An accidental discovery

One focus of Santos’ research is how interactions between electrons can lead to forms of superconductivity that cannot be explained by the 1957 description of superconductivity. An example of this so-called exotic phenomenon is oscillating superconductivity, when the paired electrons dance in waves, changing amplitude.

In an unrelated project, Santos asked Castro to investigate specific properties of Van Hove singularities, structures where many electronic states become close in energy. Castro’s project revealed that the singularities appeared to have the right kind of physics to seed oscillating superconductivity.

That sparked Santos and his collaborators to delve deeper. They uncovered a mechanism that would allow these dancing-wave states of superconductivity to arise from Van Hove singularities.

“As theoretical physicists, we want to be able to predict and classify behavior to understand how nature works,” Santos says. “Then we can start to ask questions with technological relevance.”

Some high-temperature superconductors — which function at temperatures about three times as cold as a household freezer — have this dancing-wave behavior.

The discovery of how this behavior can emerge from Van Hove singularities provides a foundation for experimentalists to explore the realm of possibilities it presents.

“I doubt that Kamerlingh Onnes was thinking about levitation or particle accelerators when he discovered superconductivity,” Santos says. “But everything we learn about the world has potential applications.”

Wednesday, July 26, 2023

Merck Prize boosts work on air sensor for pandemic pathogens

Merck KGaA, Darmstadt, Germany, awarded its 2023 Future Insight Prize to Khalid Salaita, professor of chemistry at Emory University. The award comes with $540,000 to fund the next phase of research into an air sensor that can continuously monitor indoor spaces for pathogens that can cause pandemics.

“I’m extremely thankful to receive the Future Insight Prize as this enables us to continue our path toward an early-warning system for emerging threats,” Salaita says. “Our research sets the stage for fully automated detection of airborne pathogens without human intervention or sample processing.”

The Merck Future Insight Prize recognizes groundbreaking ideas to solve some of the world’s most pressing challenges in health, nutrition and energy.

The Salaita lab’s sensor, a rolling micro-motor called “Rolosense,” holds the potential to help mitigate, or even prevent, a pandemic.

Wednesday, March 22, 2023

As the worm turns: New twists in behavioral association theories

By Carol Clark

Physicists have developed a dynamical model of animal behavior that may explain some mysteries surrounding associative learning going back to Pavlov’s dogs. The Proceedings of the National Academy of Sciences (PNAS) published the findings, based on experiments on a common laboratory organism, the roundworm C. elegans.

“We showed how learned associations are not mediated by just the strength of an association, but by multiple, nearly independent pathways — at least in the worms,” says Ilya Nemenman, an Emory professor of physics and biology whose lab led the theoretical analyses for the paper. “We expect that similar results will hold for larger animals as well, including maybe in humans.”

“Our model is dynamical and multi-dimensional,” adds William Ryu, an associate professor of physics at the Donnelly Centre at the University of Toronto, whose lab led the experimental work. “It explains why this example of associative learning is not as simple as forming a single positive memory. Instead, it’s a continuous interplay between positive and negative associations that are happening at the same time.”

First author of the paper is Ahmed Roman, who worked on the project as an Emory graduate student and is now a postdoctoral fellow at the Broad Institute. Konstantine Palanski, a former graduate student at the University of Toronto, is also an author.

The conditioned reflex

More than 100 years ago, Ivan Pavlov discovered the “conditioned reflex” in animals through his experiments on dogs. For example, after a dog was trained to associate a sound with the subsequent arrival of food, the dog would start to salivate when it heard the sound, even before the food appeared.

About 70 years later, psychologists built on Pavlov’s insights to develop the Rescorla-Wagner model of classical conditioning. This mathematical model describes conditioned associations by their time-dependent strength. That strength increases when the animal can use the conditioned stimulus (for Pavlov’s dogs, the sound) to reduce its surprise at the arrival of the unconditioned stimulus (the food).
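
For readers who want to see that idea in action, here is a minimal sketch of the textbook Rescorla-Wagner update, in which a single association strength moves a fixed fraction of the way toward its target on every trial. The learning rate, the trial counts and the function name are illustrative assumptions, not values from any study mentioned here.

```python
def rescorla_wagner(trials, learning_rate=0.3, start_strength=0.0):
    """Return the association strength after each trial.

    trials: sequence of booleans, True when food follows the sound.
    learning_rate: assumed combined salience parameter (alpha * beta).
    """
    strength = start_strength
    history = []
    for food_arrived in trials:
        target = 1.0 if food_arrived else 0.0  # maximum strength the outcome supports
        surprise = target - strength           # prediction error
        strength += learning_rate * surprise   # move part of the way toward the target
        history.append(strength)
    return history


# 20 training trials with food, then 20 extinction trials without food.
schedule = [True] * 20 + [False] * 20
strengths = rescorla_wagner(schedule)
print(f"after training:   {strengths[19]:.3f}")
print(f"after extinction: {strengths[-1]:.3f}")
```

In this simple form the model tracks only one number, so once food stops arriving the strength can only shrink toward zero.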

Such insights helped set the stage for modern theories of reinforcement learning in animals, which in turn enabled reinforcement learning algorithms in artificial intelligence systems. But many mysteries remain, including some related to Pavlov’s original experiments.

After Pavlov trained dogs to associate the sound of a bell with food, he would then repeatedly expose them to the bell without food. During the first few trials without food, the dogs continued to salivate when the bell rang. If the trials continued long enough, the dogs “unlearned” and stopped salivating in response to the bell. The association was said to be “extinguished.”

Pavlov discovered, however, that if he waited a while and then retested the dogs, they would once again salivate in response to the bell, even if no food was present. Neither Pavlov nor more recent associative-learning theories could accurately explain or mathematically model this spontaneous recovery of an extinguished association.

Teasing out the puzzle

Researchers have explored such mysteries through experiments with C. elegans. The one-millimeter roundworm has only about 1,000 cells, 300 of which are neurons. That gives scientists a simple system to test how the animal learns. At the same time, C. elegans’ neural circuitry is just complicated enough to connect some of the insights gained from studying its behavior to more complex systems.

Earlier experiments have established that C. elegans can be trained to prefer a cooler or warmer temperature by conditioning it at a certain temperature with food. In a typical experiment, the worms are placed in a petri dish with a gradient of temperatures but no food. Those trained to prefer a cooler temperature will move to the cooler side of the dish, while the worms trained to prefer a warmer temperature go to the warmer side.

But what exactly do these results mean? Some believe that the worms crawl toward a particular temperature in expectation of food. Others argue that the worms simply become habituated to that temperature, so they prefer to hang out there even without a food reward.

The puzzle could not be resolved due to a major limitation of many of these experiments — the lengthy amount of time it takes for a worm to traverse a nine-centimeter petri dish in search of the preferred temperature.

Measuring how learning changes over time

Nemenman and Ryu sought to overcome this limitation. They wanted to develop a practical way to precisely measure the dynamics of learning, or how learning changes over time.

Ryu’s lab used a microfluidic device to shrink the experimental model of nine-centimeter petri dishes into four-millimeter droplets. The researchers could rapidly run experiments on hundreds of worms, each worm encased within its individual droplet.

“We could observe in real time how a worm moved across a linear gradient of temperatures,” Ryu says. “Instead of waiting for it to crawl for 30 minutes or an hour, we could much more quickly see which side of the droplet, the cold side or the warm side, that the worm preferred. And we could also follow how its preferences changed with time.”

Their experiments confirmed that if a worm is trained to associate food with a cooler temperature, it will move to the cooler side of the droplet. Over time, however, with no food present, this preference seemingly decays.

“We found that suddenly the worms wanted to spend more time on the warm side of the droplet,” Ryu says. “That’s surprising because why would the worms develop a different preference and even avoidance of the temperature they had come to associate with food?”

Eventually the worm begins moving back and forth between the cooler and warmer temperatures. The researchers hypothesized that the worm does not simply forget the positive memory of food associated with cooler temperatures but instead starts to negatively associate the cooler side with no food. That spurs it to head for the warmer side. Then as more time passes, it begins to form a negative association of no food with the warmer temperature, which combined with the residual positive association to the cold, makes it migrate back to the cooler one.

“The worm is always learning, all the time,” Ryu explains. “There is an interplay between the drive of a positive association and a negative association that causes it to start oscillating between cold and warm.”

'It's like when you lose your keys'

Nemenman’s team developed theoretical equations to describe the interactions over time between the two independent variables — the positive, or excitatory, association that drives a worm toward one temperature and the negative, or inhibitory, association that drives it away from that temperature.
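
A toy sketch can show how two such pathways might produce a preference that flips and then flips back. It is not the set of equations from the paper: the exponential shapes, time constants, gain and function name below are all assumptions chosen only to illustrate the idea of a slowly fading excitatory association competing with an inhibitory one that builds up and then fades.

```python
import math


def cold_preference(minutes, tau_excite=60.0, tau_inhibit_decay=20.0,
                    tau_inhibit_rise=5.0, inhibit_gain=2.0):
    """Toy net preference for the cold side; positive means the worm favors cold.

    All parameter values are hypothetical and chosen for illustration only.
    """
    # Excitatory (positive) association with cold: starts at 1 and fades slowly.
    excitatory = math.exp(-minutes / tau_excite)
    # Inhibitory (negative) association: starts at 0, builds up, then fades.
    inhibitory = inhibit_gain * (math.exp(-minutes / tau_inhibit_decay)
                                 - math.exp(-minutes / tau_inhibit_rise))
    return excitatory - inhibitory


for minutes in (0, 10, 60):
    side = "cold" if cold_preference(minutes) > 0 else "warm"
    print(minutes, side)
# 0 cold
# 10 warm
# 60 cold
```

With these made-up parameters, the side the toy worm favors depends on when the measurement is taken, even though neither association ever changes sign on its own.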

“The side that the worm gravitates toward depends on when exactly you take the measurements,” Nemenman explains. “It’s like when you lose your keys you may check the desk where you usually keep them first. If you don’t see them there right away, you run around different places looking for them. If you still don’t find them, you go back to the original desk figuring you just didn’t look hard enough.”

The researchers repeated the experiments under different conditions. They trained the worms at different starting temperatures and starved them for different durations before testing their temperature preference, and the worms’ behaviors were correctly predicted by the equations.

They also tested their hypothesis by genetically modifying the worms, knocking out the insulin-like signaling pathway known to serve as a negative association pathway.

“We perturbed the biology in specific ways and when we ran the experiments, the worm’s behavior changed as predicted by our theoretical model,” Nemenman says. “That gives us more confidence that the model reflects the underlying biology of learning, at least in C. elegans.”

The researchers hope that others will test their model in studies of larger animals across species.

“Our model provides an alternative quantitative model of learning that is multi-dimensional,” Ryu says. “It explains results that are difficult, or in some cases impossible, for other theories of classical conditioning to explain.”

Related:

Physicists develop theoretical model for neural activity of mouse brain

Machine learning used to understand and predict dynamics of worm behavior

Thursday, November 17, 2022

New chemistry toolkit speeds analyses of molecules in solution

By Carol Clark

A new open-source toolkit automates the process of computing molecular properties in the solution phase, clearing new pathways for artificial-intelligence design and discovery in chemistry and beyond. The Journal of Chemical Physics published the free, open-source toolkit developed by theoretical chemists at Emory University.

Known as AutoSolvate, the toolkit can speed the creation of large, high-quality datasets needed to make advances in everything from renewable energy to human health. “By using our automated workflow, researchers can quickly generate 10, or even 100 times, more data compared to the traditional approach,” says Fang Liu, Emory assistant professor of chemistry and corresponding author of the paper. “We hope that many researchers will access our toolkit to perform high-throughput simulation and data curation for molecules in solution.”

Such datasets, Liu adds, will provide a foundation for applying state-of-the-art machine-learning techniques to drive innovation in a broad range of scientific endeavors.

First author of the paper is Eugen Hruska, a postdoctoral fellow in the Liu lab. Co-authors include Emory PhD candidate Ariel Gale and Xiao Huang, who worked on the paper as an Emory undergraduate and is now a graduate student of chemistry at Duke University.

Exploring the quantum world

A theoretical chemist, Liu leads a team specializing in computational quantum chemistry, including modeling and deciphering molecular properties and reactions in the solution phase.

The world becomes much more complex as it shrinks down to the scale of atoms and small molecules, where quantum mechanics describes the wave-particle duality of energy and matter.

Theoretical chemists use supercomputers to simulate the structures of molecules and the vast array of interactions that can occur during a reaction so that they can make predictions about how a molecule will behave under certain conditions. Understanding these dynamics is key to identifying promising molecules for various applications and for driving reactions efficiently.

Researchers have already generated datasets for the properties of many molecules in the gas phase. Molecular properties in the solution phase, however, remain relatively unexplored in the context of big data and machine learning, despite the fact that most reactions occur in solution.

The problem is that studying a molecule in solution requires much more time and effort.

A complicated process

“In the gas phase, molecules are far from each other,” Liu explains, “so when we study a molecule of interest, we don’t have to consider its neighbors.”

In the solution phase, however, a molecule is immersed among many other molecules, making the system much larger. “Imagine a solute molecule surrounded by layers and layers of water molecules,” Liu says. “Depending on its size and structure, a molecule may be covered by tens, or even up to hundreds, of water molecules. In systems of such large size, the computation will be slow and may not even be feasible.”

Before running a quantum chemistry program for a molecule in the solution phase, it’s necessary to first determine the geometry of the molecule and the location and orientation of the surrounding solvent molecules.

“This process is difficult to do,” Liu says. “It takes so much time and effort, and it’s so complicated, that a researcher can only perform this calculation for a few systems that they care about in one paper.”

Technical issues can also arise during each step in the process, she adds, leading to errors in the results.

A streamlined solution

Liu and her colleagues replaced the complicated steps required to perform these calculations with their automated system AutoSolvate.

Previously, a computational chemist might have to type hundreds of lines of code into a supercomputer to run a simulation. The command-line interface for AutoSolvate, however, requires just a few lines of code to conduct hundreds of calculations automatically.

“The time for running the simulations may be long, but that’s a job for the computer,” Liu says. “We’ve freed the researchers from most of the tedious, manual tasks of data input so that they can focus on analyzing their results and other creative work.”

In addition to the command-line interface geared toward more experienced theoretical chemists, AutoSolvate includes an intuitive graphical interface that is suitable for graduate students who are learning to run simulations.

Labs can now efficiently generate many data points for solvated molecules and then use the dataset to build machine-learning models for chemical design and discovery. AutoSolvate also makes it easier to build and share datasets across different research groups.

Setting the stage for machine learning

“During the past 10 years, machine learning has become a popular tool for chemistry but the lack of computational datasets has been a bottleneck,” Liu says. “AutoSolvate will allow the research community to curate a huge number of datasets for molecular properties in the solution phase.”

Determining the redox potential of a solvated molecule, or its tendency to gain or lose electrons, is just one example of a key research area that AutoSolvate could help advance. Redox-active molecules hold potential for applications in the development of anticancer drugs and chemical batteries for renewable-energy storage.

“Building up redox-potential datasets will then allow us to use machine learning to look at millions of different compounds to rapidly find the ones with redox potential within the desired range,” Liu says.

Instead of a black-box result, such analyses of large datasets can yield interpretable artificial intelligence, or basic rules for molecular models.

“The ultimate goal is to identify rules that can then be applied to solve a broad range of fundamental science problems,” Liu says.

The development of AutoSolvate was funded by Emory University with computational resources provided by the National Science Foundation.

Related:

A new spin on computing: Chemist leads $2.9 million DOE quest for quantum software

Chemists crack complete quantum nature of water

Chemists map cascade of reactions for producing atmosphere's 'detergent'